Amazon S3 pricing

1/12/2024

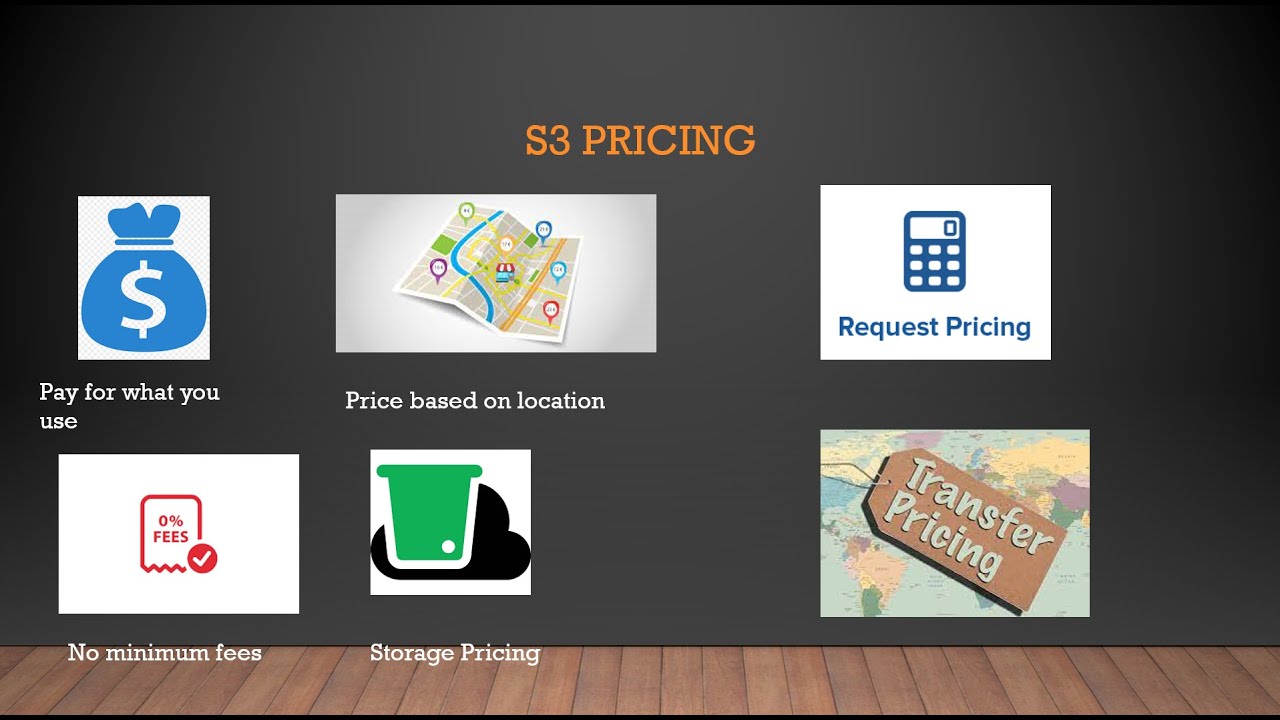

Buckets are created in a specific AWS Region and operate only there. Keys uniquely identify an object within a bucket. One convenient feature is that you can customize an object's metadata (the time of the upload, last modifications, etc.).

When using AWS S3, you pay not only for storing objects but also for storage management, transfer acceleration, requests and data transfer. S3 does its best to satisfy customers' needs. Those needs can be diverse, so the service offers various storage options.

S3 Standard is for data that needs to be reached daily and plays a vital role in everyday website operations.

Infrequent Access (IA) storage is usually used for disaster recovery purposes. You will pay less than for the Standard option, but data retrieval is more expensive.

S3 Intelligent-Tiering moves objects automatically between the FA (Frequent Access) and IA (Infrequent Access) tiers. It is a cost-effective solution because it helps you save on your data bill and not pay Standard rates for what isn't accessed anymore.

S3 One Zone-IA keeps information in a single Availability Zone, with no copies replicated to other zones. This leaves your data vulnerable in the event of flooding or other natural disasters. Therefore the price is lower than for S3 Standard.

The Glacier classes are for archives. Usually, companies store data there to meet compliance standards, such as annual reports that can't be deleted. Accessing data in Glacier can take up to 12 hours, so you need to think carefully about what objects to put there. Glacier Deep Archive is meant for data kept for regulatory requirements. Companies also use it for disaster recovery, but this happens less often.

In case you feel confused about what storage method suits your needs best, check out the comparison table. Its minimum object size entries read: 128 KB (you can store smaller objects, but they are charged as a minimum of 128 KB), 40 KB (smaller objects are charged as a minimum of 40 KB), and 128 KB (smaller objects are charged as a minimum of 40 KB).
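The minimum object size rule from the comparison table can be made concrete with a little arithmetic. The sketch below is only an illustration: the function names are made up for this post, and the price per GB is a placeholder you would replace with the current rate for your Region and storage class.

```python
KB = 1024
GB = 1024 ** 3


def billable_bytes(object_size_bytes: int, minimum_kb: int) -> int:
    """Size an object is charged for, given a minimum billable size in KB."""
    if object_size_bytes < 0:
        raise ValueError("object size cannot be negative")
    # Objects smaller than the minimum are charged as if they were the minimum.
    return max(object_size_bytes, minimum_kb * KB)


def monthly_storage_cost(object_sizes, minimum_kb, price_per_gb_month):
    """Estimated monthly storage charge for a collection of objects.

    price_per_gb_month is a placeholder value, not an actual AWS price.
    """
    total = sum(billable_bytes(size, minimum_kb) for size in object_sizes)
    return total / GB * price_per_gb_month


# A 10 KB object in a class with a 128 KB minimum is billed as 128 KB.
small_object = 10 * KB
print(billable_bytes(small_object, minimum_kb=128) // KB, "KB billed")
```

The practical takeaway is that a bucket full of tiny objects can cost more in a class with a minimum billable size than the raw byte count suggests, so it is worth running the numbers before moving small files out of S3 Standard.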
S3 was created for data storage and retrieval, and buckets, objects and keys are the main terms of its structure. AWS S3 is available in the AWS Regions of the US, Canada, Africa, Asia Pacific, Europe, the Middle East and South America. As with S3 storage, request charges depend on the Region.

I would also like S3 to have the ability to do dynamic websites without the need to do reconfigurations outside of S3. You should be able to switch on dynamic websites in S3, and then it works. I shouldn't need to go elsewhere within Amazon Web Services to get a dynamic website to work.

You need to do some things to make the files and updates immediately accessible. If you don't know those steps, you will wait forever for your updates to take effect or be seen by the public. That is the only area where a newcomer might get frustrated with hosting on S3. The files are available immediately when they are first deployed, but changes take some time to appear if you don't understand the simple procedure you need to follow for it to happen immediately. The process needed to show updates immediately is a problem. You have to go somewhere to delete whatever is propagated and restart the propagation somehow. That is the only issue that I think they could fix with hosting on S3 as far as websites are concerned.

We have a proxy server in between, running the NGINX engine. When a request hits the NGINX engine, it has a different format, and the NGINX engine converts and secures the information before it reaches CloudFront. We use a Lambda function, which is a Python program, so the code body stays in the Amazon S3 bucket. When a response is returned from the backend server, we can mask some of the information, keeping only the required information for the end response.

I use the solution for lifecycle management. The information goes from standard storage to infrequent access, and after 30 days it is moved to Glacier.

I also use Amazon S3 for batch processing. Two gigabytes is a lot of data, especially if we are adding or updating information. If we have a Java application, it can take a long time to process two gigabytes of data. I split the data into multiple 30 MB or 50 MB files to make it easier to process. I created a manifest file that specified which rows belonged to which files. Then I used the solution's batch processing with Lambda to create a pipeline that performs batch operations.
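The split-and-manifest step from the batch-processing review can be sketched roughly as follows. This is not the reviewer's actual code: the function names, the file naming pattern and the manifest layout (file name plus first and last row index) are assumptions made for illustration, and the manifest here is not the CSV format that S3 Batch Operations itself expects.

```python
def manifest_entry(prefix, number, first_row, last_row):
    """One manifest record: which rows ended up in which file (hypothetical layout)."""
    return {
        "file": f"{prefix}-{number:04d}.csv",
        "first_row": first_row,
        "last_row": last_row,
    }


def split_with_manifest(lines, max_bytes, prefix="part"):
    """Group text rows into chunks of at most max_bytes each.

    Returns (chunks, manifest). A single row larger than max_bytes
    still gets a chunk of its own rather than being dropped.
    """
    chunks, manifest = [], []
    current, current_size, start = [], 0, 0
    for index, line in enumerate(lines):
        size = len(line.encode("utf-8")) + 1  # +1 for the newline
        if current and current_size + size > max_bytes:
            # Close the current chunk before it would exceed the limit.
            manifest.append(manifest_entry(prefix, len(chunks), start, index - 1))
            chunks.append(current)
            current, current_size, start = [], 0, index
        current.append(line)
        current_size += size
    if current:
        last_row = start + len(current) - 1
        manifest.append(manifest_entry(prefix, len(chunks), start, last_row))
        chunks.append(current)
    return chunks, manifest


# For files of about 50 MB, max_bytes would be 50 * 1024 * 1024.
rows = ["aaaa"] * 5
chunks, manifest = split_with_manifest(rows, max_bytes=10)
```

Each chunk could then be uploaded as its own object and handed to a Lambda function, with the manifest telling the pipeline where any given row lives.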

We use the solution to put our static web pages on the backend servers when we use CloudFront, if a transformation is required. When a request reaches CloudFront, we have to mask some information in the response by transforming it. The solution masks or transforms the requests so the backend can understand them.
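The response masking mentioned in these reviews, where only the required information is kept in the end response, might look something like the Python sketch below. Everything specific in it is an assumption: the field names, the allow-list and the shape of the `event` dictionary are invented for illustration and do not reflect the reviewer's real Lambda function.

```python
import json

# Hypothetical allow-list: the only fields the end response should expose.
ALLOWED_FIELDS = {"id", "name", "status"}


def mask_response(body: str, allowed=ALLOWED_FIELDS) -> str:
    """Drop every top-level field of a JSON object that is not allow-listed."""
    data = json.loads(body)
    masked = {key: value for key, value in data.items() if key in allowed}
    return json.dumps(masked, sort_keys=True)


def handler(event, context=None):
    """Lambda-style entry point.

    Assumes the backend's JSON reply arrives under the made-up key
    "backend_response"; a real integration would define its own event shape.
    """
    return {"statusCode": 200, "body": mask_response(event["backend_response"])}


reply = json.dumps({"id": 1, "name": "report", "internal_token": "secret"})
result = handler({"backend_response": reply})
```

An allow-list is used here rather than a block-list so that a new field added by the backend stays hidden until someone decides it should be public.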
